MLGWSC-1

Results from the first mock data challenge for machine learning gravitational-wave search algorithms

An international team of researchers led by a PhD student at the AEI Hannover has pitted six different algorithms, four of them machine learning methods, against each other in a friendly competition. The goal of the exercise was to determine how well such algorithms perform in the search for gravitational-wave signals from binary black hole mergers. To find out, the team created a mock data challenge: six participating research groups submitted their search algorithms, which were then used to identify gravitational-wave signals hidden in four data sets of increasing complexity and realism. The study shows that these algorithms, within the limits of this challenge, are competitive with current state-of-the-art searches. Additionally, they have low computational cost and can be run on highly efficient graphics processing units with little extra effort. The team identified three key areas of research to realize the full potential of machine learning algorithms in gravitational-wave detection pipelines.

Paper abstract

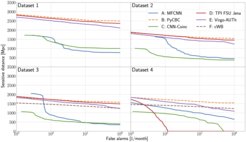

We present the results of the first Machine Learning Gravitational-Wave Search Mock Data Challenge (MLGWSC-1). For this challenge, participating groups had to identify gravitational-wave signals from binary black hole mergers of increasing complexity and duration embedded in progressively more realistic noise. The final of the 4 provided datasets contained real noise from the O3a observing run and signals up to a duration of 20 seconds with the inclusion of precession effects and higher order modes. We present the average sensitivity distance and runtime for the 6 entered algorithms derived from 1 month of test data unknown to the participants prior to submission. Of these, 4 are machine learning algorithms. We find that the best machine learning based algorithms are able to achieve up to 95% of the sensitive distance of matched-filtering based production analyses for simulated Gaussian noise at a false-alarm rate (FAR) of one per month. In contrast, for real noise, the leading machine learning search achieved 70%. For higher FARs the differences in sensitive distance shrink to the point where select machine learning submissions outperform traditional search algorithms at FARs ≥200 per month on some datasets. Our results show that current machine learning search algorithms may already be sensitive enough in limited parameter regions to be useful for some production settings. To improve the state-of-the-art, machine learning algorithms need to reduce the false-alarm rates at which they are capable of detecting signals and extend their validity to regions of parameter space where modeled searches are computationally expensive to run. Based on our findings we compile a list of research areas that we believe are the most important to elevate machine learning searches to an invaluable tool in gravitational-wave signal detection.
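
To make the two quantities in the abstract more concrete, the following Python sketch shows how a sensitive distance at a fixed false-alarm rate could be estimated in a simplified setting. It is an illustration only and not the evaluation procedure used in the challenge. The function names, the ranking-statistic inputs, and the assumption that simulated signals are distributed uniformly in volume out to `max_distance` are all hypothetical choices made for this example.

```python
import math


def threshold_for_far(background_stats, duration_months, far_per_month):
    """Return a ranking-statistic threshold such that the number of
    background (noise-only) events strictly above it does not exceed
    far_per_month * duration_months."""
    allowed = math.floor(far_per_month * duration_months)
    ranked = sorted(background_stats, reverse=True)
    if allowed >= len(ranked):
        # Every background event may be tolerated as a false alarm.
        return -math.inf
    # At most `allowed` events are strictly louder than ranked[allowed].
    return ranked[allowed]


def sensitive_distance(injection_stats, threshold, max_distance):
    """Estimate the sensitive distance from simulated signals (injections).

    Assumption (for illustration): injections are uniform in volume out
    to max_distance, so the sensitive volume is the recovered fraction
    times the volume of the injection sphere."""
    found = sum(1 for stat in injection_stats if stat > threshold)
    fraction = found / len(injection_stats)
    volume = fraction * (4.0 / 3.0) * math.pi * max_distance ** 3
    # Radius of a sphere with the same volume as the sensitive volume.
    return (3.0 * volume / (4.0 * math.pi)) ** (1.0 / 3.0)


if __name__ == "__main__":
    # Toy numbers, not taken from the challenge.
    background = [1.0, 2.0, 3.0, 4.0, 5.0]
    injections = [3.5, 10.0, 2.0, 8.0, 1.0, 0.5, 7.0, 6.0]
    thr = threshold_for_far(background, duration_months=1.0, far_per_month=2.0)
    print(thr, sensitive_distance(injections, thr, max_distance=100.0))
```

Allowing a higher false-alarm rate lowers the threshold, so more simulated signals are recovered and the sensitive distance grows. This is why the abstract quotes every comparison at a stated false-alarm rate.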